what is wa and how is it calculated

Question: wa usage

When you run top, you can see the wa usage. What does it mean and how is it calculated?

`Cpu(s): 0.1 us, 2.4 sy, 0.0 ni, 93.6 id, 3.9 wa, 0.0 hi, 0.0 si, 0.0 st`

Values related to processor utilization are displayed on the third line. They provide insight into exactly what the CPUs are doing.

- `us` is the percentage of time spent running user processes.
- `sy` is the percentage of time spent running the kernel.
- `ni` is the percentage of time spent running processes with manually configured nice values.
- `id` is the percentage of time idle (if low, the CPU may be overworked).
- `wa` is the percentage of wait time (if high, the CPU is idle waiting for I/O to complete).
- `hi` is the percentage of time managing hardware interrupts.
- `si` is the percentage of time managing software interrupts.
- `st` is the percentage of virtual CPU time waiting for access to a physical CPU.

In the context of the top command in Unix-like operating systems, the “wa” field represents the percentage of time the CPU spends waiting for I/O operations to complete. Specifically, it stands for “waiting for I/O.”

The calculation includes the time the CPU is idle while waiting for data to be read from or written to storage devices such as hard drives or SSDs. High values in the “wa” field may indicate that the system is spending a significant amount of time waiting for I/O operations to complete, which could be a bottleneck if not addressed.

The formula for calculating the “wa” value is as follows:

wa % = (time spent waiting for I/O / total CPU time) × 100
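As a rough illustration of the formula, the sketch below computes the percentage from two snapshots of cumulative CPU counters. It assumes the counters come in the field order of the aggregate cpu line in /proc/stat; the function name `wa_percent` and the tick counts are made up for the example, and top's own implementation may differ in detail.

```python
from typing import Sequence

# Assumed field order of the aggregate "cpu" line in /proc/stat.
# guest and guest_nice are left out because they are already counted
# inside user and nice.
FIELDS = ("user", "nice", "system", "idle", "iowait", "irq", "softirq", "steal")


def wa_percent(before: Sequence[int], after: Sequence[int]) -> float:
    """Return the iowait share (in percent) of all CPU time between two snapshots."""
    n = len(FIELDS)
    deltas = [b - a for a, b in zip(before[:n], after[:n])]
    total = sum(deltas)
    if total <= 0:
        return 0.0  # no time elapsed between the snapshots
    return 100.0 * deltas[FIELDS.index("iowait")] / total


# Made-up tick counts, chosen so the interval covers 1000 ticks in total.
before = (100, 0, 50, 1000, 20, 0, 0, 0)
after = (101, 0, 74, 1936, 59, 0, 0, 0)
print(round(wa_percent(before, after), 1))  # 39 iowait ticks out of 1000 -> 3.9
```

The counters are cumulative since boot, so the percentage only makes sense as a difference between two samples taken some interval apart.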

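We can also use vmstat 1 to watch I/O wait on the system. Note that the b column under procs is a count of processes blocked in uninterruptible sleep, not a percentage, while the wa column under cpu is a percentage of CPU time:

`vmstat 1`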

1 | Procs |

In summary, a high “wa” value in the output of top suggests that your system is spending a considerable amount of time waiting for I/O operations to complete, which could impact overall system performance. Investigating and optimizing disk I/O can be beneficial in such cases, possibly by improving disk performance, optimizing file systems, or identifying and addressing specific I/O-intensive processes. We can use iotop to find the processes that generate heavy or slow I/O:

`Current DISK READ: 341.78 M/s | Current DISK WRITE: 340.53 M/s`

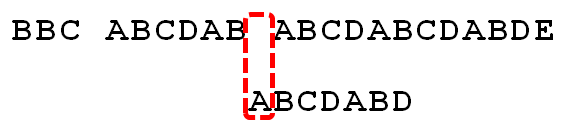

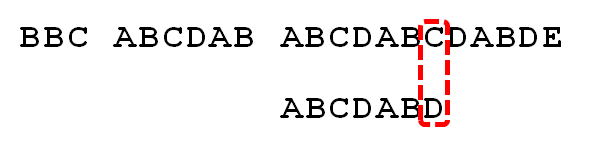

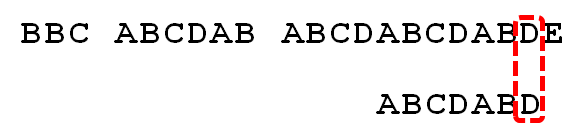
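When iotop is not available, a coarse alternative is to list processes in uninterruptible sleep (state D), the state tasks are usually in while blocked on disk I/O. The sketch below is only an approximation under that assumption: D state is not exclusive to disk I/O, and the helper names `parse_pid_stat` and `blocked_processes` are invented for this example.

```python
import glob


def parse_pid_stat(text: str):
    """Return (pid, comm, state) from the contents of /proc/<pid>/stat.

    The command name is wrapped in parentheses and may itself contain
    spaces or parentheses, so split on the first "(" and the last ")".
    """
    left = text.index("(")
    right = text.rindex(")")
    pid = int(text[:left])
    comm = text[left + 1:right]
    state = text[right + 1:].split()[0]
    return pid, comm, state


def blocked_processes():
    """List (pid, comm) for every process currently in state D."""
    result = []
    for path in glob.glob("/proc/[0-9]*/stat"):
        try:
            with open(path) as f:
                pid, comm, state = parse_pid_stat(f.read())
        except (OSError, ValueError, IndexError):
            continue  # the process exited or the file is unreadable
        if state == "D":
            result.append((pid, comm))
    return result


if __name__ == "__main__":
    for pid, comm in blocked_processes():
        print(pid, comm)
```

This is a point-in-time snapshot, so it is most useful when run repeatedly while wa is high.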

Code tracing

Running strace on the top command shows that it reads the wa value from /proc/stat. According to the code in fs/proc/stat.c, /proc/stat is generated by the proc ops, which take the iowait time from kernel_cpustat.cpustat[CPUTIME_IOWAIT].
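To make the file format concrete, here is a minimal sketch that maps the counters on a /proc/stat cpu line to field names. The field order is the documented one for the cpu line; the helper name `parse_cpu_line` and the sample line are illustrative only.

```python
# Field names for the counters on a "cpu" line, in file order.
FIELD_NAMES = (
    "user", "nice", "system", "idle", "iowait",
    "irq", "softirq", "steal", "guest", "guest_nice",
)


def parse_cpu_line(line: str) -> dict:
    """Map the counters on a /proc/stat "cpu" line to their field names."""
    parts = line.split()
    if not parts or not parts[0].startswith("cpu"):
        raise ValueError("not a cpu line: %r" % line)
    values = [int(v) for v in parts[1:]]
    # zip() stops at the shorter sequence, so older kernels that expose
    # fewer columns still parse.
    return dict(zip(FIELD_NAMES, values))


# Illustrative sample line, not taken from a real system.
sample = "cpu  4705 356 584 3699 23 23 0 0 0 0"
stats = parse_cpu_line(sample)
print(stats["iowait"])  # the fifth counter on the line
```

On a Linux machine the same function can be fed the first line of /proc/stat, for example `parse_cpu_line(open("/proc/stat").readline())`. The values are cumulative ticks since boot, not percentages.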

1 | static const struct proc_ops stat_proc_ops = { |

In the Linux kernel, the calculation of the “wa” (waiting for I/O) value that is reported by tools like top is handled within the kernel’s scheduler. The specific code responsible for updating the CPU usage statistics can be found in the kernel/sched/cputime.c file.

One of the key functions related to this is account_idle_time().

1 | /* |

This function is part of the kernel’s scheduler and is responsible for accounting the time a CPU spends idle. It distinguishes between plain idle time and time spent waiting for I/O: when the CPU is idle while threads are blocked waiting for I/O, the CPU time is accumulated into CPUTIME_IOWAIT instead of CPUTIME_IDLE.

Here is a basic outline of how the “wa” time is accounted for in the Linux kernel:

- Idle time accounting: The kernel keeps track of the time the CPU spends in an idle state, waiting for tasks to execute.

- I/O wait time accounting: When the CPU is idle but at least one task is blocked waiting for an I/O operation (such as reading from or writing to a disk), the kernel charges that idle time to the iowait counter, which top reports as “wa”.
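The two cases above can be sketched as a toy model. This is not kernel code: `ToyCpu` and `account_idle_tick` are hypothetical names, and the model only mirrors the decision of charging an idle tick to either the idle or the iowait counter depending on whether any task is blocked on I/O.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCpu:
    """Toy stand-in for one CPU's accounting state (not a kernel structure)."""
    nr_iowait: int = 0  # tasks currently blocked waiting for I/O
    cpustat: dict = field(default_factory=lambda: {"idle": 0, "iowait": 0})

    def account_idle_tick(self, ticks: int = 1) -> None:
        """Charge idle ticks to iowait if any task is blocked on I/O, else to idle."""
        if self.nr_iowait > 0:
            self.cpustat["iowait"] += ticks
        else:
            self.cpustat["idle"] += ticks


cpu = ToyCpu()
cpu.account_idle_tick(5)   # nothing blocked: counted as idle
cpu.nr_iowait = 1          # a task starts waiting for a disk read
cpu.account_idle_tick(3)   # idle with pending I/O: counted as iowait
cpu.nr_iowait = 0          # the read completes
cpu.account_idle_tick(2)   # plain idle again
print(cpu.cpustat)         # {'idle': 7, 'iowait': 3}
```

The model shows why wa is a flavor of idle time: the CPU is doing nothing in both cases, and only the presence of a blocked task changes which counter grows.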

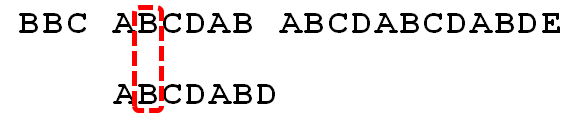

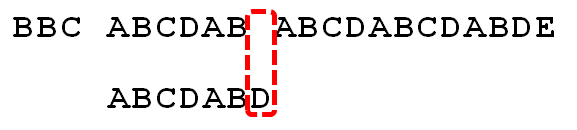

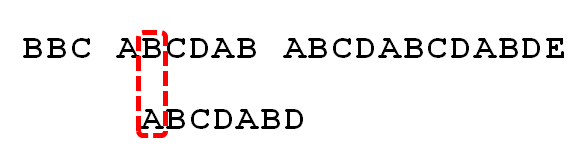

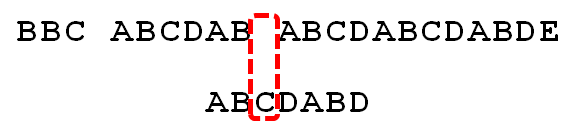

1 | static int |